Using Whisper AI Locally to Transcribe 2-Hour Voice Memos Completely Offline

Long voice memos often contain useful material, such as meetings, lectures, interviews, or brainstorming sessions, but transcribing them by hand is slow and tedious. Most transcription services process audio in the cloud. That raises privacy concerns and brings limits on file size and usage quotas, as well as a dependence on an internet connection. Run locally, Whisper AI offers a strong alternative: it can transcribe recordings two hours long with no internet connection at all. Because processing happens on the user's own machine, sensitive recordings never leave the device, and the speech-to-text output is generally highly accurate. When properly configured, Whisper handles large audio files efficiently and produces structured transcripts suitable for content creation, documentation, or notes. This makes it a valuable tool for professionals, students, and researchers who work with lengthy recordings. Getting the most out of Whisper AI for offline transcription starts with understanding how to configure and optimise the local installation.

How Whisper AI Handles Speech Recognition

Whisper AI is a speech recognition model that uses deep learning to convert spoken language into written text. It was trained on a large, varied collection of multilingual audio, much of it noisy. This helps it cope with different accents, recording conditions, and speaking styles. The model does not take in a whole file at once. Instead, it converts the audio into a spectrogram and processes it in 30-second windows, predicting the text for each window in turn. Because it learns patterns from data rather than relying heavily on hand-written language rules, it is more flexible and robust than many traditional speech recognition systems. When Whisper runs locally, all computation happens on the user's own machine, so audio is never sent to an external server. Users can therefore process sensitive recordings without exposing their data. Since long recordings are handled window by window, the model is well suited to very lengthy voice memos.
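
The arithmetic below shows what the 30-second window means for a two-hour memo. It is a minimal sketch that assumes fixed, non-overlapping windows. The real transcribe loop in openai-whisper moves its window according to the timestamps it decodes, so its actual boundaries differ. The helper name window_bounds is hypothetical. The last line estimates the memory taken by the decoded audio alone, at 4 bytes per float32 sample.

```python
import math

SAMPLE_RATE = 16_000  # Whisper resamples all input to 16 kHz mono
WINDOW_SECONDS = 30   # the model looks at no more than 30 s of audio per pass

def window_bounds(duration_s: float, window_s: float = WINDOW_SECONDS) -> list:
    """(start, end) times in seconds for fixed, non-overlapping windows.

    Simplified: openai-whisper actually advances its window according to the
    timestamps it decodes, so real window boundaries will differ.
    """
    count = math.ceil(duration_s / window_s)
    return [(i * window_s, min((i + 1) * window_s, duration_s)) for i in range(count)]

two_hours = 2 * 60 * 60                            # 7200 seconds
windows = window_bounds(two_hours)
print(len(windows))                                # 240
print(windows[-1])                                 # (7170, 7200)
print(two_hours * SAMPLE_RATE)                     # 115200000 samples
print(two_hours * SAMPLE_RATE * 4 // 1_000_000)    # 460 (MB as float32)
```

At roughly 240 windows and several hundred megabytes of decoded audio, a two-hour file is a sizeable job. This is why model size and hardware matter so much in the sections that follow.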

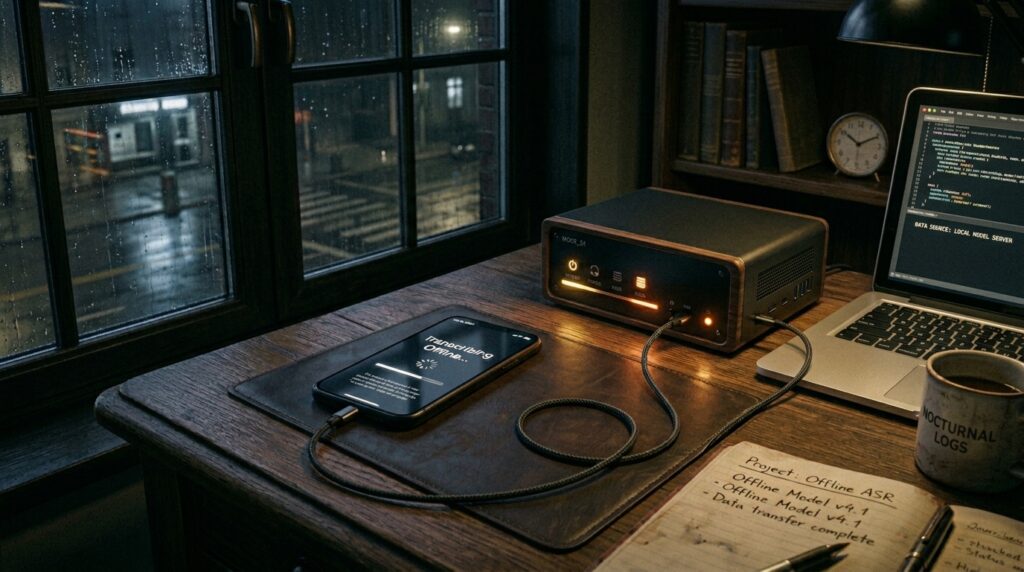

Preparing Whisper AI for Offline Use

Before Whisper AI can run locally, users need to install the model and its dependencies on their machine. This usually means setting up a Python environment, installing the openai-whisper package through pip, and making sure FFmpeg is available for audio decoding. The model weights are downloaded the first time a model is loaded and cached on disk. After that, they are read locally with no network access. Depending on their hardware, users can choose smaller, faster models or larger, more accurate ones. GPU acceleration can greatly speed up processing, which matters most for long files such as two-hour recordings. A correct installation is essential for running long transcriptions without interruption. Once set up, Whisper can be run from the command line or called from Python scripts, and this configuration forms the basis of any offline transcription workflow.
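
The sketch below loads a cached model without network access. It assumes the openai-whisper package (pip install -U openai-whisper) and FFmpeg are already installed. The helper names load_offline and default_cache_dir are hypothetical. By default, openai-whisper caches checkpoints under ~/.cache/whisper, or $XDG_CACHE_HOME/whisper when that variable is set. whisper.load_model() also accepts a path to a local .pt file. Loading by explicit path makes the offline dependency visible and fails fast if the file is missing.

```python
import os
from pathlib import Path

def default_cache_dir() -> Path:
    """Default openai-whisper checkpoint folder: $XDG_CACHE_HOME/whisper or ~/.cache/whisper."""
    base = os.getenv("XDG_CACHE_HOME", os.path.join(os.path.expanduser("~"), ".cache"))
    return Path(base) / "whisper"

def load_offline(checkpoint: str, device: str = "cpu"):
    """Load a Whisper model from a local .pt checkpoint, never from the network."""
    path = Path(checkpoint).expanduser()
    if not path.is_file():
        raise FileNotFoundError(f"No checkpoint at {path}; download it once while online.")
    import whisper  # lazy import: needs openai-whisper installed and FFmpeg on PATH
    return whisper.load_model(str(path), device=device)
```

For example, run whisper.load_model("medium") once while online so that the checkpoint is cached. Then call load_offline(str(default_cache_dir() / "medium.pt")) on the offline machine. Check the exact file name in the cache folder, because aliases such as "large" resolve to versioned file names.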

Preparing Long Voice Memos for Optimal Processing

Preparing audio files before transcription helps the process run smoothly. Whisper decodes audio through FFmpeg, so common formats such as MP3, WAV, and M4A are all supported. Keeping long memos in one of these formats avoids compatibility problems. Basic audio cleanup, such as reducing background noise, can noticeably improve transcription accuracy. Whisper is robust to noise, but it still produces better results from cleaner input. Normalising loudness also helps, because very quiet passages are more likely to be misrecognised or skipped. For very long recordings, splitting the file into smaller chunks can make processing more stable and easier to resume if something fails. Together, these steps reduce the likelihood of errors and help the model perform consistently across the whole recording.
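
The preparation steps can be scripted with FFmpeg. This sketch makes several assumptions: FFmpeg is on PATH, and the filter chain and chunk length are illustrative choices, not Whisper requirements. The chain applies highpass at 80 Hz to trim low rumble, then loudnorm for loudness normalisation. Chunks are 10 minutes long. build_ffmpeg_cmd and prepare are hypothetical helper names. Whisper resamples input to 16 kHz mono internally anyway, so converting to that format up front mainly keeps the chunks small and uniform.

```python
import shutil
import subprocess
from pathlib import Path

def build_ffmpeg_cmd(src: str, out_dir: str, chunk_minutes: int = 10) -> list:
    """FFmpeg command: 16 kHz mono WAV, loudness-normalised, split into fixed-length chunks."""
    pattern = str(Path(out_dir) / "chunk_%03d.wav")   # chunk_000.wav, chunk_001.wav, ...
    return [
        "ffmpeg", "-hide_banner", "-y",
        "-i", src,
        "-af", "highpass=f=80,loudnorm",              # trim rumble, then normalise loudness
        "-ar", "16000", "-ac", "1", "-c:a", "pcm_s16le",
        "-f", "segment", "-segment_time", str(chunk_minutes * 60),
        pattern,
    ]

def prepare(src: str, out_dir: str, chunk_minutes: int = 10) -> list:
    """Run the conversion and return the chunk files in playback order."""
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("FFmpeg is not on PATH")
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(build_ffmpeg_cmd(src, out_dir, chunk_minutes), check=True)
    return sorted(Path(out_dir).glob("chunk_*.wav"))
```

Fixed-length chunks can cut a word or sentence at the boundary. Reviewing the text around chunk edges, or choosing split points at pauses, reduces that risk.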

Transcribing Two-Hour Audio Files Efficiently

Processing long audio files calls for careful management of system resources. Whisper AI can handle extended recordings, but performance depends on the hardware and on the model size. For two-hour voice memos, the medium or large models are recommended for their better accuracy. Smaller models trade some accuracy for faster processing. During transcription, the model works through the audio segment by segment and produces the text for each one in sequence. Depending on the system, this can take longer than the audio itself. Running the job in a stable environment helps avoid interruptions, for example on a machine that will not sleep or restart partway through. When it finishes, Whisper returns the full transcript text together with timestamped segments. This makes it easier to review the output and extract the relevant information.
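
The following is a minimal end-to-end sketch for one long file. It assumes openai-whisper is installed and the medium model is already cached. It reports the real-time factor, meaning processing time divided by audio length, so you can see whether your machine runs faster or slower than the recording. transcribe_long and realtime_factor are hypothetical names. whisper.load_audio, model.transcribe, and the "text" and "segments" keys of its result are part of openai-whisper.

```python
import time
from pathlib import Path

SAMPLE_RATE = 16_000  # openai-whisper resamples every input to 16 kHz mono

def realtime_factor(audio_seconds: float, elapsed_seconds: float) -> float:
    """Processing time divided by audio duration; above 1.0 means slower than real time."""
    return elapsed_seconds / audio_seconds

def transcribe_long(audio_path: str, model_name: str = "medium",
                    out_txt: str = "transcript.txt") -> dict:
    """Transcribe one long recording and save the plain text to out_txt."""
    import whisper  # lazy import: needs openai-whisper and FFmpeg

    model = whisper.load_model(model_name)   # read from the local cache once downloaded
    audio = whisper.load_audio(audio_path)   # decoded by FFmpeg into a float32 array
    start = time.perf_counter()
    result = model.transcribe(audio, verbose=False)  # verbose=False shows a progress bar
    elapsed = time.perf_counter() - start

    Path(out_txt).write_text(result["text"].strip() + "\n", encoding="utf-8")
    rtf = realtime_factor(len(audio) / SAMPLE_RATE, elapsed)
    print(f"{len(result['segments'])} segments, real-time factor {rtf:.2f}")
    return result
```

Usage: transcribe_long("memo.m4a"). A real-time factor of 0.5 means the two-hour memo took about an hour to process.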

Improving Accuracy with Language and Context Settings

Whisper AI lets users specify the spoken language, which can noticeably improve transcription accuracy. When the language is set explicitly, the model skips automatic language detection and focuses on the relevant linguistic patterns, reducing interpretation errors. Context also matters, especially for subject-specific recordings such as academic lectures or technical talks. Whisper needs no manual training, but its output can still be steered with an initial prompt that supplies context, such as the topic or unusual terms and names. Clear speech improves accuracy further, particularly in long recordings. Combining the right language setting with clean audio lets users produce highly reliable transcripts and helps turn even complex two-hour recordings into readable text.
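
These settings correspond to the language and initial_prompt keyword arguments of model.transcribe() in openai-whisper. The helper below is a hypothetical convenience that assembles them. Its glossary-style prompt is one common way to nudge the spelling of names and jargon. It is not a guaranteed fix.

```python
def build_transcribe_options(language=None, glossary=None, topic=None) -> dict:
    """Assemble keyword arguments for openai-whisper's model.transcribe().

    language: a code such as "en"; when set, automatic language detection is skipped.
    glossary, topic: hypothetical inputs folded into initial_prompt as context.
    """
    options = {}
    if language:
        options["language"] = language
    prompt_parts = []
    if topic:
        prompt_parts.append(topic.strip().rstrip(".") + ".")
    if glossary:
        prompt_parts.append("Terms: " + ", ".join(glossary) + ".")
    if prompt_parts:
        options["initial_prompt"] = " ".join(prompt_parts)
    return options
```

For example: model.transcribe("lecture.m4a", **build_transcribe_options("en", ["Kubernetes", "gRPC"], "Lecture on distributed systems")). Sometimes a long recording gets stuck repeating a phrase. In that case, also passing condition_on_previous_text=False is worth trying. openai-whisper describes this option as making the model less prone to failure loops, at the cost of consistency between windows.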

Managing Large Output Files and Organising Transcripts

Long voice memos produce large amounts of text that need organising before they are useful. Splitting a transcript into sections by timestamp or by change of topic makes it much easier to navigate. Whisper can produce time-coded output, such as SRT or VTT subtitle files alongside plain text and JSON, showing where each passage occurs in the audio. This is especially helpful when specific details need to be found quickly, for instance when reviewing meetings or interviews. Post-processing tools can refine the layout further by adding headings or dividing the text into paragraphs. Well-organised transcripts are easier to read and act on. This organisation is what turns raw transcription output into a usable knowledge resource.
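
Whisper's result includes a segments list. Each entry has start and end times in seconds plus its text. The post-processing sketch below turns those segments into an SRT subtitle file. It can also produce a plain-text transcript split into paragraphs at longer pauses. The 2-second pause threshold and all function names are illustrative assumptions. The openai-whisper command-line tool can also write SRT directly.

```python
def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"

def to_srt(segments) -> str:
    """Build SRT subtitle text from Whisper-style segments (dicts with start, end, text)."""
    blocks = []
    for index, seg in enumerate(segments, start=1):
        start, end = srt_timestamp(seg["start"]), srt_timestamp(seg["end"])
        blocks.append(f"{index}\n{start} --> {end}\n{seg['text'].strip()}\n")
    return "\n".join(blocks)

def paragraphs(segments, gap_seconds: float = 2.0):
    """Group segments into (start_time, text) paragraphs, breaking after long pauses."""
    result, current, para_start, last_end = [], [], 0.0, None
    for seg in segments:
        if current and last_end is not None and seg["start"] - last_end > gap_seconds:
            result.append((para_start, " ".join(current)))
            current = []
        if not current:
            para_start = seg["start"]
        current.append(seg["text"].strip())
        last_end = seg["end"]
    if current:
        result.append((para_start, " ".join(current)))
    return result

def to_text(segments, gap_seconds: float = 2.0) -> str:
    """Plain-text transcript with an [HH:MM:SS] marker before each paragraph."""
    return "\n\n".join(
        f"[{srt_timestamp(start)[:8]}] {text}"
        for start, text in paragraphs(segments, gap_seconds)
    )
```

For a result returned by model.transcribe(), to_srt(result["segments"]) gives text ready to save as a .srt file. to_text(result["segments"]) gives a timestamped reading copy.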

Optimising Performance on Local Hardware

Running Whisper AI locally can be resource-intensive, especially with long recordings and the larger, more accurate models. To get the best performance, choose a model size that matches the available CPU or GPU capacity. GPU acceleration cuts processing time substantially and is recommended for longer recordings. Memory management also matters, because a model that does not fit comfortably in memory can cause slowdowns or crashes. Closing non-essential applications frees up resources and can improve performance. Unless the system has been tuned for it, avoid running many transcriptions at the same time. By adjusting these settings, users can strike the balance between speed and accuracy that suits their workflow.
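
One way to match the model to the hardware is to check how much GPU memory PyTorch reports. The sketch then picks the largest model that fits with some headroom. The memory figures are the approximate values listed in the openai-whisper README and should be treated as a rough guide. pick_model and detect_device are hypothetical helpers, and the 1 GB headroom is an arbitrary safety margin.

```python
# Approximate VRAM per model from the openai-whisper README; a rough guide only.
APPROX_VRAM_GB = {"large": 10, "medium": 5, "small": 2, "base": 1, "tiny": 1}

def pick_model(available_gb: float, headroom_gb: float = 1.0) -> str:
    """Return the largest model whose approximate need plus headroom fits in memory."""
    for name in ("large", "medium", "small", "base"):
        if APPROX_VRAM_GB[name] + headroom_gb <= available_gb:
            return name
    return "tiny"  # smallest option when nothing else fits

def detect_device():
    """Return ("cuda", total GPU memory in GiB) if PyTorch sees a CUDA GPU, else ("cpu", None)."""
    import torch  # lazy import; PyTorch is installed as an openai-whisper dependency

    if torch.cuda.is_available():
        total_bytes = torch.cuda.get_device_properties(0).total_memory
        return "cuda", total_bytes / 1024**3
    return "cpu", None
```

A possible call sequence is shown below. The "small" fallback for CPU-only machines is an arbitrary choice.

device, gb = detect_device()
model = whisper.load_model(pick_model(gb) if gb else "small", device=device)

Then pass fp16=(device == "cuda") to model.transcribe(). Half precision is not supported on the CPU, and openai-whisper falls back to FP32 there with a warning.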

Scaling Offline Transcription for Continuous Use

Once Whisper AI is set up correctly, it can be built into a continuous transcription workflow. An automated script can watch a folder for new voice memos and process them as they arrive, turning recordings into text without any manual intervention. The result is a fully offline transcription system that runs quietly in the background. Professionals who regularly record meetings or ideas stand to benefit most from such a setup. Scaling the system in this way makes transcription a natural part of the workflow rather than a separate task. Used regularly, offline Whisper AI becomes an effective productivity tool for managing long-form audio.
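
A folder watcher can be built with the standard library alone. It polls an inbox folder and transcribes any memo that has no matching transcript yet. This sketch assumes openai-whisper is installed and the chosen model is cached. The folder layout, the one-minute poll interval, the size-stability check for files still being copied, and all function names are illustrative. An event-based library such as watchdog could replace the polling loop.

```python
import time
from pathlib import Path

AUDIO_EXTS = {".m4a", ".mp3", ".wav"}  # illustrative; FFmpeg can decode many more

def find_pending(inbox: Path, outbox: Path) -> list:
    """Audio files in inbox that have no matching .txt transcript in outbox yet."""
    return sorted(
        p for p in inbox.iterdir()
        if p.suffix.lower() in AUDIO_EXTS and not (outbox / (p.stem + ".txt")).exists()
    )

def is_stable(path: Path, wait: float = 2.0) -> bool:
    """Treat a file as ready only if its size is unchanged after `wait` seconds."""
    size = path.stat().st_size
    time.sleep(wait)
    return path.stat().st_size == size

def watch(inbox: str, outbox: str, model_name: str = "small", poll_seconds: int = 60) -> None:
    """Poll inbox forever, writing one transcript per new memo into outbox."""
    import whisper  # lazy import: needs openai-whisper and FFmpeg

    model = whisper.load_model(model_name)  # load once and reuse for every memo
    in_dir, out_dir = Path(inbox), Path(outbox)
    out_dir.mkdir(parents=True, exist_ok=True)
    while True:
        for audio in find_pending(in_dir, out_dir):
            if not is_stable(audio):
                continue  # still being copied; retry on the next poll
            result = model.transcribe(str(audio))
            transcript = out_dir / (audio.stem + ".txt")
            transcript.write_text(result["text"].strip() + "\n", encoding="utf-8")
            print(f"Transcribed {audio.name} -> {transcript.name}")
        time.sleep(poll_seconds)
```

For example, watch("memos/inbox", "memos/transcripts", model_name="medium") can be left running in a terminal or started at login. The model is loaded once, outside the loop, so each new memo pays only the transcription cost.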