How to Secure Your Autonomous Agents Against “Prompt Injection” Attacks

How to Secure Your Autonomous Agents Against “Prompt Injection” Attacks

In the year 2026, autonomous artificial intelligence agents are becoming an essential component of company operations, handling anything from contacts with customers to intricate processes that include many steps. On the other hand, as these agents increase their level of autonomy and their capacity for decision-making, they also become potential targets for assaults known as “prompt injection.” The term “prompt injection” refers to the process by which an adversary controls the input or instructions that are sent to an artificial intelligence agent in order to change the behavior of the agent or obtain unauthorized access to systems and data. Data breaches, workflows that are damaged, or activities that are carried out by the agent that are dangerous might all be the result of these assaults. In order to keep autonomous systems secure and dependable, it is necessary to have a thorough understanding of the risks, to put in place solid protections, and to monitor the behavior of agents. protecting artificial intelligence agents is just as important for contemporary businesses as protecting conventional information technology structures.

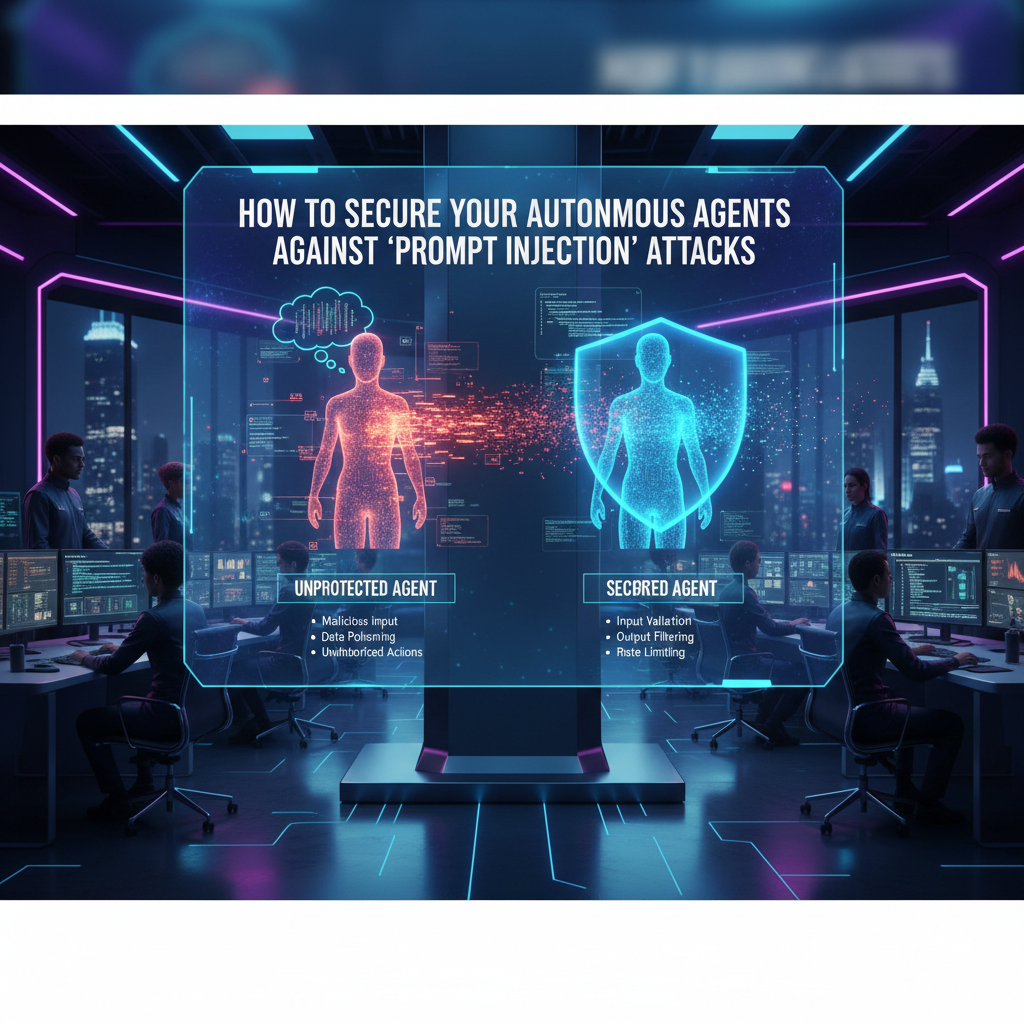

Familiarizing Oneself with Prompt Injection

When malevolent or external inputs are meant to override the instructions that an artificial intelligence agent is supposed to follow, this is known as prompt injection. These kind of assaults, in contrast to more conventional software vulnerabilities, are designed to affect the decision-making logic of the agent by including hidden commands or misleading instructions into the inputs. Without being aware of it, the agent could carry out activities that were never approved. To begin the process of designing robust defenses, the first step is to have an understanding of the mechanics of rapid injection. In the year 2026, it is very necessary for anybody who is implementing autonomous systems to be aware of potential attack vectors.

Recognition of Workflows That Are Vulnerable

It is possible for prompt injection to occur in some agent processes more often than in others. There is a significant level of danger associated with agents that handle user input that has not been screened, receive instructions from numerous sources, or create actions without validation. The effect of an injection may be amplified by complex multi-agent systems that include activities that are depending on one another. In order to prioritize the protection of these susceptible sites, security assessments should detect potential vulnerabilities. By the year 2026, vulnerability mapping has become an integral part of the process of deploying AI tactics.

Performing Sanitization and Validation on Input

When it comes to protecting against prompt injection, strong input validation is one of the most effective options. Sanitization and verification of the required formats, permissible commands, and reliable sources should be performed on any and all instructions, messages, or data that are fed into an artificial intelligence agent. Any inputs that are either unexpected or malformed need to be refused or notified. It is because of this that harmful instructions are prevented from affecting the reasoning process of the agent. In the year 2026, input validation is seen as an essential safety measure for autonomous artificial intelligence systems.

The Control of Access Based on Roles

When the capabilities of an AI agent are restricted according to their function or the context, the effect of inserted cues is diminished. An example of this would be a customer service representative that handles queries from customers which should not have access to critical corporate databases or financial systems. The breadth of the potential harm is limited by role-based permissions, which guarantee that even if a malicious input is accepted, the impact will be limited. Fine-grained access control is an essential component of artificial intelligence security architecture in the year 2026.

Monitoring of Behavior and Contextual Information

Monitoring the behavior of agents in real time allows for the detection of abnormalities that are brought on by quick injection. It is possible to automatically identify agents that abruptly diverge from the processes that are expected of them, try operations that are not permitted, or provide outputs that are inconsistent. The use of behavioral analytics assists in the detection of harmful operations prior to their spread across the system. To ensure the safety of AI agents in the year 2026, continual behavioral monitoring will be an essential line of defense.

Increased Intensity and Human Supervision

An oversight system that includes a person in the loop is beneficial even for highly autonomous systems. Escalation procedures should be able to reroute the work to a human operator in the event that an agent is confronted with inputs that are either unexpected or unclear. This not only stops agents from carrying out orders that may possibly do damage, but it also affords them the chance to examine the likely origin of the injection. By the year 2026, human monitoring will continue to be an essential safety layer in the implementation of secure AI.

Auditing and Performing Updates on Agent Logic

In order to mitigate vulnerabilities, it is helpful to do routine updates on the agent logic, prompt rules, and workflow settings. When conducting security audits, it is important to include stress tests and simulated injection attempts in order to identify vulnerabilities. A further benefit of auditing is that it guarantees that agents adhere to both internal policies and external regulatory norms. Continuous auditing is seen as a preventative security strategy rather than a reactive repair in the year 2026.

Integrity of Data and Encryption Measures

In order to prevent data from being intercepted or altered by attackers, it is necessary to ensure that inputs and outputs are transmitted in a secure manner. Protecting the content as well as the instructions that are given to agents is accomplished through the use of encryption, digital signatures, and integrity checks. This provides an additional layer of defense against injection attacks, which consist of manipulating communication channels in order to gain access to sensitive information. In the year 2026, cryptographic safeguards of high-value autonomous systems are considered to be the norm.

Providing Training for Agents of Resilience

The ability to recognize suspicious patterns in input or unusual instruction structures can be taught to agents for training purposes. When agents are provided with adversarial training, they are better able to recognize and disregard potentially malicious commands. It is important to note that this does not take the place of conventional security measures; however, it does add an adaptive defense mechanism that evolves alongside new threats. In the year 2026, resilient agent design is employed, which integrates proactive intelligence with standard security protocols.

Developing a Safe and Self-Reliant Artificial Intelligence Ecosystem

A multi-layered approach is required in order to protect autonomous agents from prompt injection. This approach includes input validation, role-based access, monitoring, human oversight, auditing, encryption, and resilience training. These precautions, when taken together, guarantee that artificial intelligence agents will function dependably and safely, even in hostile environments. It is essential to implement stringent security practices in order to safeguard both operations and sensitive data as the use of autonomous systems continues to spread across every industry. In the year 2026, one of the most fundamental requirements for trust, efficiency, and scalability in modern businesses is the presence of AI agents that are both safe and secure.